Mayo Clinic Health System, Eau Claire, 2026 Quality

Predictive Modeling for Efficient Patient Selection and Transfer to Critical Access Hospitals

The Right Patient, The Right Place: Smarter Patient Selection and Transfer for Critical Access Hospitals

Imagine a bustling Emergency Department at a large, tertiary care “hub” hospital. The waiting room is full, and highly specialized beds are occupied by patients who are stable but still need care. Meanwhile, just an hour away, a Critical Access Hospital (CAH) in a rural community has open beds and dedicated staff ready to help.

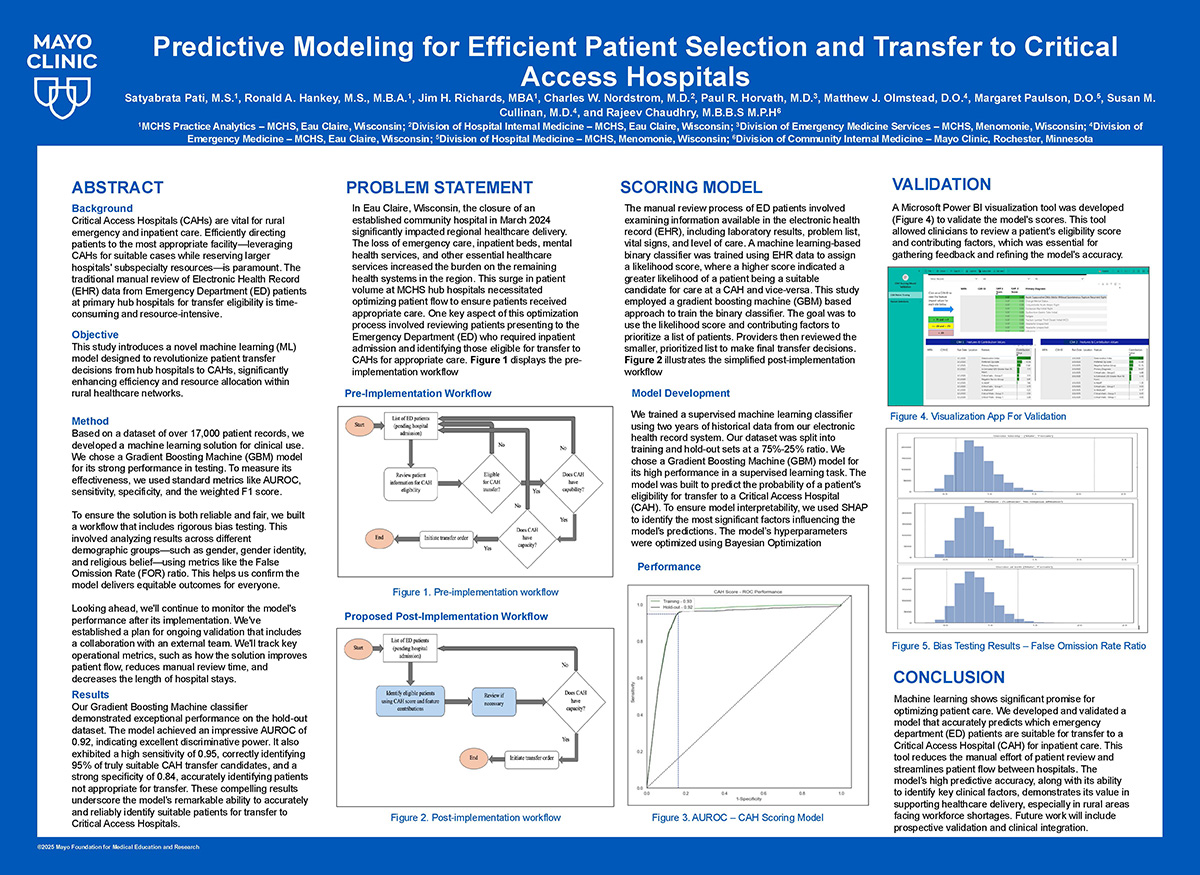

Connecting these two realities by moving stable patients to rural hospitals, which frees up space at the hub for complex, high-acuity emergencies, sounds like an obvious solution. In practice, however, it is a persistent operational bottleneck. Identifying which patients are clinically appropriate for a CAH transfer requires doctors and nurses to spend hours manually clicking through Electronic Health Records (EHRs). This time-consuming task drains resources and slows patient flow, ultimately straining the entire healthcare network.

To solve this, we asked a crucial question: Can we use historical data to automate this process and predict which patients are the best candidates for transfer?

We developed a machine learning decision support system to act as a smart assistant for Emergency Department teams. Using a Gradient Boosting Machine (GBM), an algorithm well suited to uncovering complex, non-linear patterns, we trained our model on nearly 40,000 past patient encounters. The system learned to recognize the subtle clinical fingerprints of the roughly 30% of patients who were successfully transferred in the past.
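
For readers who want to see the idea behind gradient boosting in code, here is a minimal toy sketch. It is not the model described in this article: the two features (heart rate and lactate), the eight example encounters, and all function names are hypothetical, and a real project would more likely rely on an established library than on hand-written code. The sketch repeatedly fits a one-split "stump" to the errors the current model still makes, which is the core loop of a GBM.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_stump(X, residuals):
    """Find the single feature/threshold split that best fits the residuals."""
    best = None
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            left = [r for row, r in zip(X, residuals) if row[j] <= t]
            right = [r for row, r in zip(X, residuals) if row[j] > t]
            if not left or not right:
                continue  # a split must leave examples on both sides
            left_mean = sum(left) / len(left)
            right_mean = sum(right) / len(right)
            sse = (sum((r - left_mean) ** 2 for r in left)
                   + sum((r - right_mean) ** 2 for r in right))
            if best is None or sse < best[0]:
                best = (sse, j, t, left_mean, right_mean)
    return best[1:]

def fit_gbm(X, y, n_rounds=20, learning_rate=0.3):
    """Boost stumps on the log-loss gradient (label minus predicted probability)."""
    base_rate = sum(y) / len(y)
    initial = math.log(base_rate / (1.0 - base_rate))  # start from the base rate
    scores = [initial] * len(y)
    stumps = []
    for _ in range(n_rounds):
        residuals = [label - sigmoid(s) for label, s in zip(y, scores)]
        j, t, left_value, right_value = fit_stump(X, residuals)
        stumps.append((j, t, left_value, right_value))
        scores = [s + learning_rate * (left_value if row[j] <= t else right_value)
                  for row, s in zip(X, scores)]
    return initial, learning_rate, stumps

def predict_proba(model, row):
    """Return the predicted probability that an encounter is transfer-eligible."""
    initial, learning_rate, stumps = model
    score = initial
    for j, t, left_value, right_value in stumps:
        score += learning_rate * (left_value if row[j] <= t else right_value)
    return sigmoid(score)

# Hypothetical encounters: [heart_rate, lactate]; label 1 = was transferred.
X = [[72, 1.0], [80, 1.2], [118, 3.9], [125, 4.5],
     [76, 0.9], [110, 3.1], [84, 1.4], [130, 5.0]]
y = [1, 1, 0, 0, 1, 0, 1, 0]

model = fit_gbm(X, y)
p_stable = predict_proba(model, [75, 1.0])
p_unstable = predict_proba(model, [122, 4.2])
```

In this toy data, `p_stable` comes out above 0.5 and `p_unstable` below it, because the stumps learn that low heart rate and low lactate went with past transfers.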

When evaluated on held-out test data, the model performed well. It achieved an area under the receiver operating characteristic curve (AUROC) of 0.93, indicating a strong ability to distinguish between patients who should stay at the hub and those who could safely continue their recovery at a CAH.
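
To make the AUROC figure concrete, the sketch below computes it from first principles: AUROC is the probability that a randomly chosen positive case (here, a transferred patient) receives a higher score than a randomly chosen negative case, with ties counted as half. The labels and scores are made-up illustration values, not data from this project.

```python
def auroc(labels, scores):
    """Fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Hypothetical example: 1 = transferred, 0 = stayed at the hub.
example_labels = [1, 1, 0, 1, 0, 0]
example_scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
example_auroc = auroc(example_labels, example_scores)  # 8 of 9 pairs ranked correctly
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, so 0.93 means the model ranks a transferred patient above a non-transferred one in about 93% of such pairs.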

But in healthcare, predictive performance alone is not enough; a tool must also earn the trust of the clinicians using it. Doctors cannot blindly follow a “black box” algorithm. To bridge this gap, we integrated SHapley Additive exPlanations (SHAP). Instead of just giving a patient a transfer eligibility score, SHAP acts like a digital colleague, explaining why the model made that recommendation. It highlights which vital signs, lab results, or demographics pushed the score up or down, so physicians can make the final, informed call.
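
The idea behind SHAP can be illustrated with an exact Shapley calculation on a tiny example. The sketch below is an assumption-laden toy, not the SHAP library and not the deployed model: `toy_score`, the three features, and the baseline "typical patient" are all hypothetical. It averages each feature's marginal effect on the score over every order in which the features could be revealed.

```python
from itertools import permutations

def toy_score(f):
    """Hypothetical transfer-eligibility score with one feature interaction."""
    score = 0.5 - 0.004 * (f["heart_rate"] - 80) - 0.1 * (f["lactate"] - 1.0)
    if f["on_oxygen"] and f["heart_rate"] > 100:
        score -= 0.2  # the combination counts against transfer
    return score

def shapley_values(predict, patient, baseline):
    """Exact Shapley values: average marginal contribution over all orderings."""
    names = list(patient)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)           # start from the baseline patient
        previous = predict(current)
        for name in order:
            current[name] = patient[name]  # reveal one real feature value
            updated = predict(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}

baseline = {"heart_rate": 80, "lactate": 1.0, "on_oxygen": False}
patient = {"heart_rate": 110, "lactate": 2.0, "on_oxygen": True}
contributions = shapley_values(toy_score, patient, baseline)
```

The contributions always add up to the difference between the patient's score and the baseline score, which is what lets a clinician read them as "this much of the change came from heart rate, this much from lactate." Enumerating every ordering is only practical for a handful of features; the SHAP library uses much faster algorithms for tree-based models.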

Furthermore, we rigorously audited the model to check that it was safe and fair. Our fairness testing evaluated the system’s performance across demographic groups, including sex assigned at birth, gender identity, and ethnicity. The results indicated that the model makes equitable recommendations across the patient subgroups we tested, so that efficiency does not come at the cost of bias.
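
One common way to run this kind of audit is to compute the same performance metric separately for each subgroup and compare the results. The sketch below is illustrative only: the article does not specify which metrics were used, so the choice of true positive rate, the 0.5 threshold, the group names, and the records are all assumptions.

```python
from collections import defaultdict

def true_positive_rate_by_group(records, threshold=0.5):
    """For each group, the share of truly eligible patients the model flags."""
    flagged = defaultdict(int)
    eligible = defaultdict(int)
    for group, label, score in records:
        if label == 1:
            eligible[group] += 1
            if score >= threshold:
                flagged[group] += 1
    return {group: flagged[group] / eligible[group] for group in eligible}

# Hypothetical records: (subgroup, true label, model score).
records = [
    ("group_a", 1, 0.9), ("group_a", 1, 0.4), ("group_a", 0, 0.2),
    ("group_b", 1, 0.8), ("group_b", 1, 0.7), ("group_b", 0, 0.6),
]
rates = true_positive_rate_by_group(records)
gap = max(rates.values()) - min(rates.values())
```

A large `gap` would signal that eligible patients in one subgroup are being missed more often than in another and would warrant a closer look before deployment.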

Today, this tool is not just a theoretical concept; it is actively deployed at select pilot sites. It is already assisting ED physicians in real time, significantly reducing the manual burden of chart reviews.

Like any good clinician, the tool is also continuously learning. Early in the pilot, we noticed the system sometimes flagged “low acuity” patients who were actually well enough to simply be discharged home within a few hours. We isolated this issue and are now refining the model by feeding it real-time ED triage acuity scores, teaching it to filter out these rapid-discharge cases.

As we look toward the future, we are preparing to expand this technology to more Critical Access Hospitals. By streamlining the triage process, this machine learning system is doing more than just moving data; it is helping optimize hospital capacity, reducing emergency room wait times, and ensuring that every patient receives the right care, in the right place, at the right time.